The problem with “explain your answer”

I’m not sure how I missed it when it came out, but Barry Garelick and Katherine Beals’s “Explaining Your Math: Unnecessary at Best, Encumbering at Worst,” which appeared in The Atlantic last month, is a must-read for anyone who wants to understand just how problematic some of Common Core’s assumptions about learning are, particularly as they pertain to requiring young children to explain their reasoning in writing.

(Side note: I’m not sure what’s up with The Atlantic, but they’ve at least partially redeemed themselves for the very, very factually questionable piece they recently ran about the redesigned SAT. Maybe the editors have realized how much everyone hates Common Core by this point and thought it would be in their best interest to jump on the bandwagon, but they don’t think that the general public has yet drawn the connection between CC and the Coleman-run College Board?)

I’ve read some of Barry’s critiques of Common Core before, and his explanations of “rote understanding” in part provided the framework that helped me understand just what “supporting evidence” questions on the reading section of the new SAT are really about.

Barry and Katherine’s article is worth reading in its entirety, but one point struck me as particularly salient:

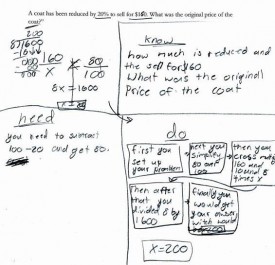

Math learning is a progression from concrete to abstract…Once a particular word problem has been translated into a mathematical representation, the entirety of its mathematically relevant content is condensed onto abstract symbols, freeing working memory and unleashing the power of pure mathematics. That is, information and procedures that have become automatic frees up working memory. With working memory less burdened, the student can focus on solving the problem at hand. Thus, requiring explanations beyond the mathematics itself distracts and diverts students away from the convenience and power of abstraction. Mandatory demonstrations of “mathematical understanding,” in other words, can impede the “doing” of actual mathematics.

Although it’s not an exact analogy, many of these points have verbal counterparts. Reading is also a progression from concrete to abstract: first, students learn that letters are represented as abstract symbols, and that those symbols correspond to specific sounds, which get combined in various ways. When students have mastered the symbol/sound relationship (decoding) and encoded it in their brains, their working memories are freed up to focus on the content of what they are reading, a switch that normally occurs around third or fourth grade.

Amazingly, Common Core does not prescribe that students compose paragraphs (or flow charts) demonstrating, for example, that they understand why c-a-t spells cat. (Actually, anyone, if you have heard of such an exercise, please let me know. I just made that up, but given some of the stories I’ve heard about what goes on in classrooms these days, I wouldn’t be surprised if someone, somewhere were actually doing that.)

What CC does require, however, is a slightly higher-level equivalent: namely, the continual citing of textual “evidence.” As I outlined in my last couple of posts, CC, and thus the new SAT, often employs a very particular definition of “evidence.” Rather than use quotations, etc. to support their own ideas about a work or the arguments it contains (arguments that would necessarily reveal background knowledge and comprehension, or lack thereof), students are required to demonstrate their comprehension over and over again by “staying within the four corners of the text,” repeatedly returning to it to cite key words and phrases that reveal its meaning. In other words, they must demonstrate their understanding of the (presumably) self-evident principle that a text means what it means because it says what it says. As is true for math, this entire approach to reading confuses demonstration of a skill with “deep” possession of that skill.

That, of course, has absolutely nothing to do with how reading works in the real world. Nobody, nobody, reads this way. Strong readers do not need to stop repeatedly in order to demonstrate that they understand what they’re reading. They do not need to point to words or phrases and announce that they mean what they mean because they mean it. Rather, they indicate their comprehension by discussing (or writing about) the content of the text, by engaging with its ideas, by questioning them, by showing how they draw on or influence the ideas of others, by pointing out subtleties other readers might miss… the list goes on and on.

Incidentally, I’ve had adults gush to me that their children/students are suddenly acquiring all sorts of higher-level skills, like citing texts and using evidence, but I wonder whether they’re actually being taken in by appearances. As I mentioned in my last post, although it may seem that children being taught this way are performing a sophisticated skill (“rote understanding”), they are actually performing a very basic one. I think Barry puts it perfectly when he says, “It is as if the purveyors of these practices are saying: ‘If we can just get them to do things that look like what we imagine a mathematician does, then they will be real mathematicians.’”

In that context, these parents’/teachers’ reactions are entirely understandable: the logic of what is actually going on is so bizarre and runs so completely counter to a commonsense understanding of how the world works that such an explanation would occur to virtually no one who hadn’t spent considerable time mucking around in the CC dirt.

To get back to my original point, though, the obsessive focus on the text itself, while certainly appropriate in some situations, ultimately serves to prohibit students from moving beyond the text, from engaging with its ideas in any substantive way. But then, I suspect that this limited, artificial type of analysis is actually the goal.

I think that what it ultimately comes down to is assessment — or rather the potential for electronic assessment. Students’ own arguments are messier, less “objective,” and more complicated, and thus more expensive, to assess. Holistic, open-ended assessment just isn’t scalable the same way that computerized multiple choice tests are, and choosing/highlighting specific lines of a text is an act that lends itself well to (cheap, automated) electronic grading. And without these convenient types of assessments, how could the education market ever truly be brought to scale?