by Erica L. Meltzer | Aug 21, 2016 | Blog, College Admissions, Parents, Students

After I posted a list of reasons that students should continue to consider passing up the new SAT in favor of the ACT, I received messages from a couple of readers who said that they shared my misgivings about the redesigned test, but that they had a very practical concern regarding that exam: namely, the PSAT and qualification for National Merit Scholarships.

In both cases, they indicated that their children would be dependent on scholarship money to attend college, and that they could not afford to pass up the opportunities offered by the National Merit program.

I confess that this was the last thing on my mind when I wrote the list, but it is a very real concern, and I appreciate having it called to my attention.

I do want to address the issue here, albeit with the caveat that I am not a financial aid expert, and that you should check with guidance counselors and individual colleges because policies and guidelines vary from school to school.

I’m going to go into a lot more detail below, but in a nutshell: If you are unable to afford college without a full scholarship and are focusing on a group of less selective public universities, primarily in the (Mid)west and South, that offer large amounts of aid to students with high stats in order to boost their rankings, then yes, National Merit can count for a lot. But otherwise, it may have little to no effect on the amount of aid you ultimately receive.

by Erica L. Meltzer | Mar 22, 2016 | Blog, The New SAT

Apparently I’m not the only one who has noticed something very odd about PSAT score reports. California-based Compass Education has produced a report analyzing some of the inconsistencies in this year’s scores.

The report raises more questions than it answers, but the findings themselves are very interesting. For anyone who has the time and the inclination, it’s well worth reading.

Some of the highlights include:

- Test-takers are compared to students who didn’t even take the test and may never take the test.

- In calculating percentiles, the College Board relied on an undisclosed sample method when it could have relied on scores from students who actually took the exam.

- 3% of students scored in the 99th percentile.

- In some parts of the scale, scores were raised as much as 10 percentage points between 2014 and 2015.

- More sophomores than juniors obtained top scores.

- Reading/writing benchmarks for both sophomores and juniors have been lowered by over 100 points; at the same time, the elimination of the wrong-answer penalty would permit a student to approach the benchmark while guessing randomly on every single question.

by Erica L. Meltzer | Jan 17, 2016 | Blog, The New SAT

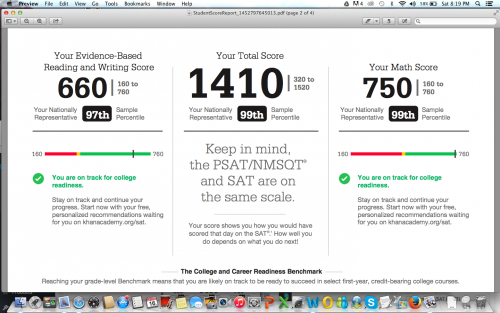

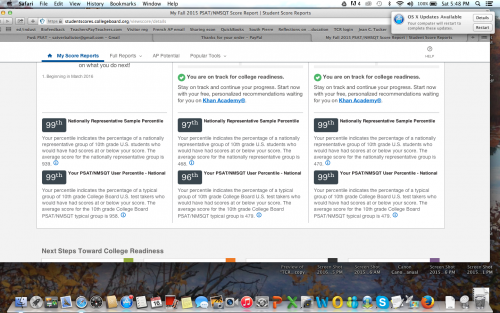

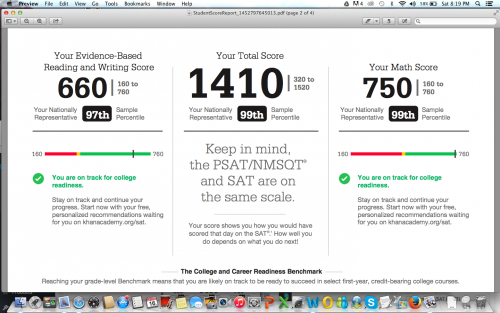

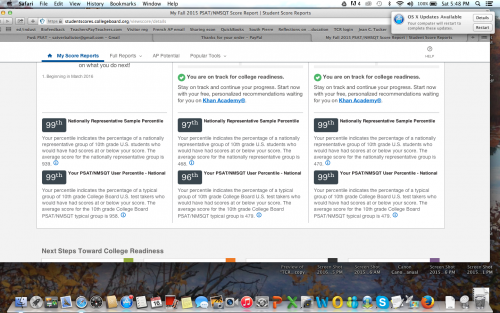

A couple of posts back, I wrote about a recent Washington Post article in which a tutor named Ned Johnson pointed out that the College Board might be giving students an exaggeratedly rosy picture of their performance on the PSAT by creating two score percentiles: a “user” percentile based on the group of students who actually took the test; and a “national percentile” based on how the student would rank if every 11th (or 10th) grader in the United States took the test. The latter is almost guaranteed to be higher than the user percentile.
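To see why a percentile computed against a broader population tends to come out higher than one computed against actual test-takers, here is a minimal sketch in Python. All of the scores are invented purely for illustration, and the assumption that non-test-takers would score lower is exactly that, an assumption; this is not the College Board's actual sampling method, which has not been disclosed.

```python
def percentile_rank(score, population):
    """Percentage of scores in `population` that fall at or below `score`."""
    at_or_below = sum(1 for s in population if s <= score)
    return 100.0 * at_or_below / len(population)


# Hypothetical scores of students who actually took the test.
test_takers = [900, 1000, 1100, 1200, 1250, 1300, 1400, 1500]

# Hypothetical scores for students who did NOT take the test,
# assumed here (for illustration only) to fall lower on the scale.
non_takers = [700, 800, 850, 950]

my_score = 1250

# "User" percentile: ranked only against actual test-takers.
user_pct = percentile_rank(my_score, test_takers)

# "National" percentile: ranked against test-takers plus the assumed non-takers.
national_pct = percentile_rank(my_score, test_takers + non_takers)

print(f"User percentile:     {user_pct}")
print(f"National percentile: {national_pct}")
```

With these made-up numbers, the same score of 1250 ranks noticeably higher once the lower-scoring hypothetical non-takers are added to the comparison group, even though nothing about the student's performance has changed.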

When I read Johnson’s analysis, I assumed that both percentiles would be listed on the score report. But actually, there’s an additional layer of distortion not mentioned in the article.

I stumbled on it quite by accident. I’d seen a PDF-form PSAT score report, and although I only recalled seeing one set of percentiles listed, I assumed that the other set must be on the report somewhere and that I simply hadn’t noticed them.

A few days ago, however, a longtime reader of this blog was kind enough to offer me access to her son’s PSAT so that I could see the actual test. Since it hasn’t been released in booklet form, the easiest way to give me access was simply to let me log in to her son’s account (it’s amazing what strangers trust me with!).

When I logged in, I did in fact see the two sets of percentiles, with the national, higher percentile of course listed first. But then I noticed the “download report” button, and something occurred to me. The earlier PDF report I’d seen absolutely did not present the two sets of percentiles as clearly as the online report did — of that I was positive.

So I downloaded a report, and sure enough, only the national percentiles were listed. The user percentile, the ranking based on the group of students who actually took the test, was completely absent. I looked over every inch of that report, as well as the earlier report I’d seen, and I could not find the user percentile anywhere.

Unfortunately (well, fortunately for him, unfortunately for me), the student in question had scored extremely well, so the discrepancy between the two percentiles was barely noticeable. For a student with a score 200 points lower, the gap would be more pronounced. Nevertheless, I’m posting the two images here (with permission) to illustrate the difference in how the percentiles are reported on the different reports.

Somehow I didn’t think the College Board would be quite so brazen in its attempt to mislead students, but apparently I underestimated how dirty they’re willing to play. Giving two percentiles is one thing, but omitting the lower one entirely from the report format that most people will actually pay attention to is really a new low.

I’ve been hearing tutors comment that they’ve never seen so many students obtain reading scores in the 99th percentile, which apparently extends all the way down to 680/760 for the national percentile, and 700/760 for the user percentile. Well…that’s what happens when a curve is designed to inflate scores. But hey, if it makes students and their parents happy, and boosts market share, that’s all that counts, right? Shareholders must be appeased.

Incidentally, the “college readiness” benchmark for 11th grade reading is now set at 390. 390. I confess: I tried to figure out what that corresponds to on the old test, but looking at the concordance chart gave me such a headache that I gave up. (If anyone wants to explain it to me, you’re welcome to do so.) At any rate, it’s still shockingly low (the benchmark on the old test was 550), as well as a whopping 110 points lower than the math benchmark. There’s also an “approaching readiness” category, which further extends the wiggle room.

A few months back, before any of this had been released, I wrote that the College Board would create a curve to support the desired narrative. If the primary goal was to pave the way for a further set of reforms, then scores would fall; if the primary goal was to recapture market share, then scores would rise. I guess it’s clear now which way they decided to go.

by Erica L. Meltzer | Jan 10, 2016 | Blog, The New SAT

Apparently I’m not the only one who thinks the College Board might be trying to pull some sort of sleight-of-hand with scores for the new test.

In this Washington Post article about the (extremely delayed) release of 2015 PSAT scores, Ned Johnson of PrepMatters writes:

Here’s the most interesting point: College Board seems to be inflating the percentiles. Perhaps not technically changing the percentiles but effectively presenting a rosier picture by an interesting change to score reports. From the College Board website, there is this explanation about percentiles:

Percentiles

A percentile is a number between 0 and 100 that shows how you rank compared to other students. It represents the percentage of students in a particular grade whose scores fall at or below your score. For example, a 10th-grade student whose math percentile is 57 scored higher or equal to 57 percent of 10th-graders.

You’ll see two percentiles:

The Nationally Representative Sample percentile shows how your score compares to the scores of all U.S. students in a particular grade, including those who don’t typically take the test.

The User Percentile — Nation shows how your score compares to the scores of only some U.S. students in a particular grade, a group limited to students who typically take the test.

What does that mean? Nationally Representative Sample percentile is how you would stack up if every student took the test. So, your score is likely to be higher on the scale of Nationally Representative Sample percentile than actual User Percentile.

On the PSAT score reports, College Board uses the (seemingly inflated) Nationally Representative score, which, again, bakes in scores of students who DID NOT ACTUALLY TAKE THE TEST but, had they been included, would have presumably scored lower. The old PSAT gave percentiles of only the students who actually took the test.

For example, I just got a score from a junior; 1250 is reported 94th percentile as Nationally Representative Sample percentile. Using the College Board concordance table, her 1250 would be a selection index of 181 or 182 on last year’s PSAT. In 2014, a selection index of 182 was 89th percentile. In 2013, it was 88th percentile. It sure looks to me that College Board is trying to flatter students. Why might that be? They like them? Worried about their feeling good about the test? Maybe. Might it be a clever statistical sleight of hand to make taking the SAT seem like a better idea than taking the ACT? Nah, that’d be going too far.

I’m assuming that last sentence is intended to be taken ironically.

One quibble. Later in the article, Johnson also writes that “If the PSAT percentiles are in fact “enhanced,” they may not be perfect predictors of SAT success, so take a practice SAT.” But if PSAT percentiles are “enhanced,” who is to say that SAT percentiles won’t be “enhanced” as well?

Based on the revisions to the AP exams, the College Board’s formula seems to go something like this:

(1) Take a well-constructed, reasonably valid test, one for which years of collected data exist, and declare that it is no longer relevant to the needs of 21st century students.

(2) Replace existing test with a more “holistic,” seemingly more rigorous exam, for which the vast majority of students will be inadequately prepared.

(3) Create a curve for the new exam that artificially inflates scores.

(4) Proclaim students “college ready” when they may be still lacking fundamental skills.

(5) Find another exam, and repeat the process.